Every now and then I want to check how a URL redirects. One example was when I investigated why a domain failed to load in browsers a while ago because of certificate problems:

- [Wayback/Archive] SSL Server Test: vems-sassenheim.nl (Powered by Qualys SSL Labs)

- [Wayback/Archive] SSL Server Test: www.vems-sassenheim.nl (Powered by Qualys SSL Labs)

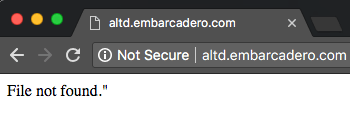

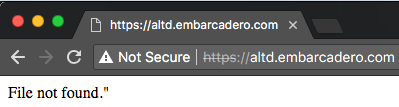
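SSL Labs is far more thorough, but you can approximate the part that bit here (a certificate that browsers refuse) locally. Below is a minimal Python sketch that uses only the standard library and assumes Python 3.7+ for `ssl.SSLCertVerificationError`. The function names are my own, hypothetical ones. Python's `ssl` module relies on OpenSSL and the system trust store, which is close to, but not exactly, what each browser checks:

```python
import socket
import ssl

# A few OpenSSL verification codes, as exposed by ssl.SSLCertVerificationError.verify_code.
KNOWN_VERIFY_CODES = {
    10: "certificate has expired",
    18: "self-signed certificate (not issued by any trusted CA)",
    19: "self-signed certificate in the certificate chain",
    20: "unable to get local issuer certificate (incomplete chain?)",
    62: "certificate does not match the host name",
}


def describe_verify_error(code: int, message: str) -> str:
    """Turn an OpenSSL verify code into a short human-readable reason."""
    # Fall back to OpenSSL's own message for codes not listed above.
    return "failed: " + KNOWN_VERIFY_CODES.get(code, message)


def check_certificate(host: str, port: int = 443, timeout: float = 10.0) -> str:
    """Do a verified TLS handshake and report why it fails, if it does."""
    # The default context verifies both the certificate chain and the host name.
    context = ssl.create_default_context()
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            with context.wrap_socket(sock, server_hostname=host):
                return "ok: chain and host name verified"
    except ssl.SSLCertVerificationError as exc:
        return describe_verify_error(exc.verify_code, exc.verify_message)
    except OSError as exc:
        # Other handshake errors (ssl.SSLError is an OSError), refusals, timeouts, DNS failures.
        return f"failed: {exc}"
```

Just like the two SSL Labs tests above, you would run it for both names, for instance `check_certificate("vems-sassenheim.nl")` and `check_certificate("www.vems-sassenheim.nl")`. A self-signed certificate shows up as verify code 18.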

The thing was that back then, the site officially did not have a security certificate, but somehow the provider had installed a self-signed one. Most web browsers then automatically redirect from http to https, so visitors ended up at a certificate the browser rejects. Luckily the archival sites can archive without redirecting:

When querying [Wayback/Archive] redirect check – Google Search, you get quite a few results. These are the ones I use most, in descending order of preference, with the reason for each position:

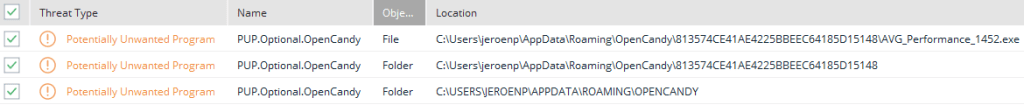
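The web tools do this for you. For a quick check from a script, here is a minimal Python sketch (standard library only) that requests each hop without following it, recording the status code and `Location` header of every step. The names `trace_redirects` and `next_url` are hypothetical. The sketch also assumes the server answers `HEAD` the same way as `GET`, which is not true for every server:

```python
import http.client
from typing import List, Optional, Tuple
from urllib.parse import urljoin, urlsplit

# Status codes whose Location header points to the next hop.
REDIRECT_STATUSES = {301, 302, 303, 307, 308}

Hop = Tuple[str, int, Optional[str]]  # (url, status, Location header or None)


def next_url(current: str, status: int, location: Optional[str]) -> Optional[str]:
    """Return the absolute URL of the next hop, or None if this hop is final."""
    if status in REDIRECT_STATUSES and location:
        # Location may be relative, so resolve it against the current URL.
        return urljoin(current, location)
    return None


def trace_redirects(url: str, max_hops: int = 10) -> List[Hop]:
    """Request each hop with HEAD (http.client never auto-follows) and record it."""
    hops: List[Hop] = []
    for _ in range(max_hops):
        parts = urlsplit(url)
        if parts.scheme == "https":
            # Verifies the certificate, so a self-signed one raises ssl.SSLCertVerificationError.
            conn = http.client.HTTPSConnection(parts.netloc, timeout=10)
        else:
            conn = http.client.HTTPConnection(parts.netloc, timeout=10)
        target = parts.path or "/"
        if parts.query:
            target += "?" + parts.query
        try:
            conn.request("HEAD", target)
            response = conn.getresponse()
            status = response.status
            location = response.getheader("Location")
        finally:
            conn.close()
        hops.append((url, status, location))
        following = next_url(url, status, location)
        if following is None:
            break
        url = following
    return hops
```

Usage would be something like `for url, status, location in trace_redirects("http://vems-sassenheim.nl/"): print(status, url, "->", location)`. Stepping through hops one at a time, instead of letting a library follow them, shows every intermediate `Location`, including an http to https hop that lands on a bad certificate.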

One of the domains not yet monitored at